chatgpt

Control Windows with Your Voice and the Magic of ChatGPT

Uses voice-to-text transcription and code generated by ChatGPT to have your computer listen to your every command!

openai

Provides guidance on how to reduce latency when working with the OpenAI Whisper API

ruby

In order to try out the Fastlane tool, I needed to install Ruby on my Windows development machine today. The process took me a few attempts, so I thought I'd share some of the mistakes to avoid and how to get everything running. How to Install Ruby The easiest

gcp

Cloud Functions provide a lightweight execution environment for code in various languages. They are the serverless compute solution in Google Cloud Platform (GCP), similar to Lambda functions in AWS. There are two different versions of Cloud Functions available: 1st Gen and 2nd Gen. 2nd Gen is recommended

esbuild

esbuild plugin that allows ignoring specific files by adding comments into the source code.

coding

There are many different ways to style React components, such as importing plain CSS, using styled components, CSS-in-JS, or CSS Modules. These all have various advantages and disadvantages. To me, it seems CSS Modules provide the best solution overall for beginner to intermediate use. We can use the

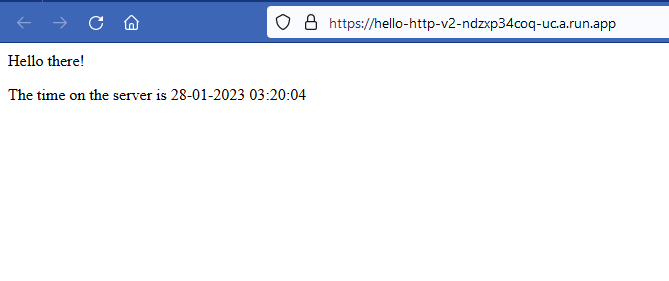

aws

AWS HTTP APIs provide a new way to deploy REST-based APIs in AWS, offering a number of simplifications over the original REST APIs. However, when working with HTTP APIs we need to be aware of a few gotchas, such as what types to use for handler arguments. REST APIs also

aws

I have long been very sceptical of so-called NoSQL databases. I believe that traditional SQL databases provide better higher-level abstractions for defining data structures and working with data. However, I have received a few queries for a DynamoDB template for my project builder Goldstack and I figured a

amazon-route-53

AWS S3 has long been known as an effective way to host static websites and assets. Unfortunately, while it is easy to configure an S3 bucket to enable static file hosting, it is quite complicated to achieve the following: * Use your own domain name * Enable users to access the content

aws

AWS S3 is a cloud service for storing data using a simple API. In contrast to a traditional database, S3 is more akin to a file system. Data is stored under a certain path. The strengths of S3 are its easy-to-use API as well as its very low

aws

Amazon Simple Email Service (SES) is a serverless service for sending emails from your applications. Like other AWS services, you can send emails with SES using the AWS REST API or the AWS SDKs. In this article, I want to look at how to send emails using SES with TypeScript

aws

Next.js 12 was released a few months ago. It is a major update to the Next.js framework that includes a lot of new features and improvements. Goldstack provides two templates based on Next.js: Next.js Template for building vanilla Next.js applications Next.js + Bootstrap Template